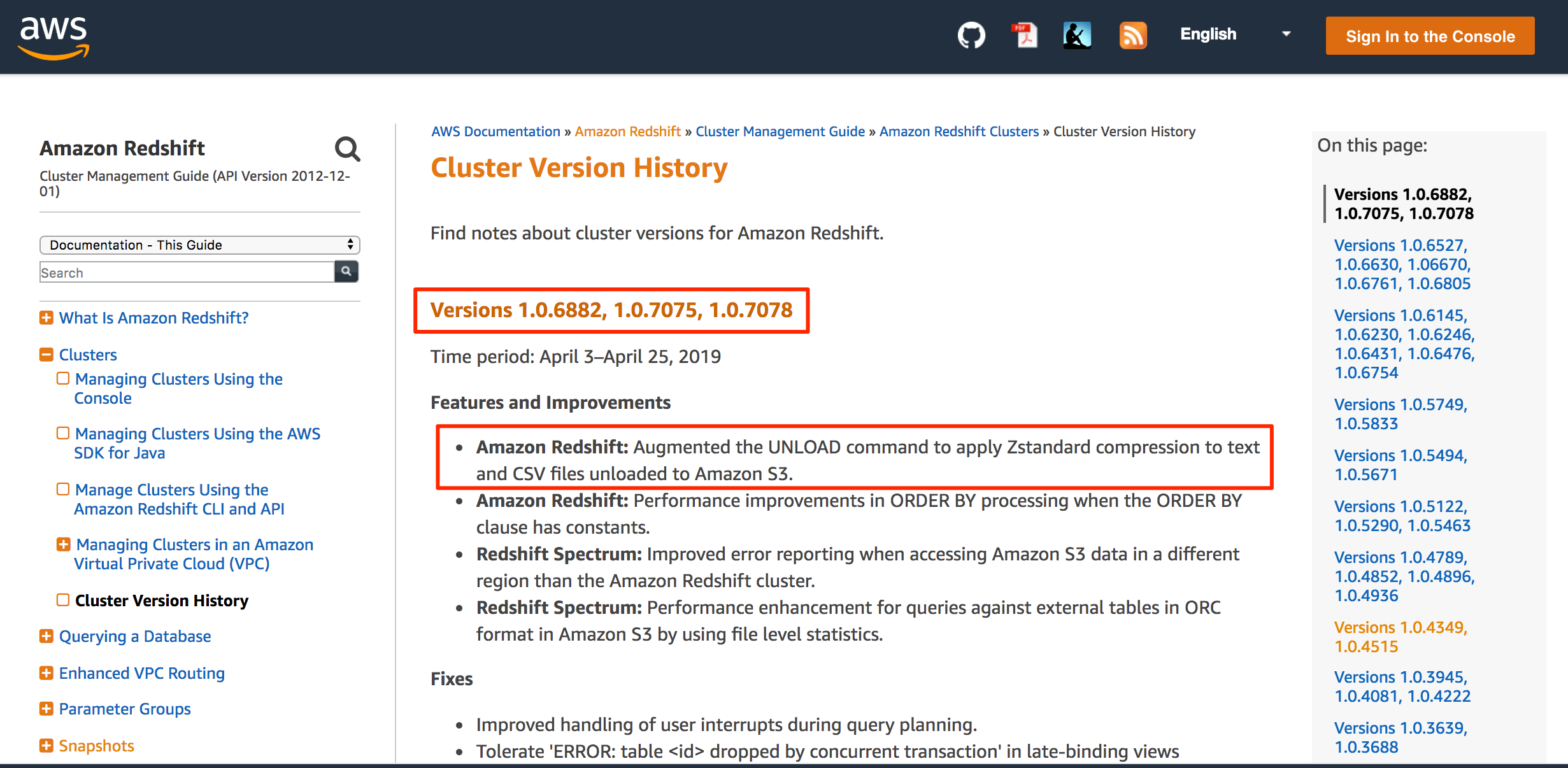

The primary method AWS Redshift natively supports for exporting data is the UNLOAD command. It provides many options for formatting the exported data and controlling how it is written, and this article looks at some of the frequently used ones. You can unload text data in either a delimited or a fixed-width format, regardless of the data format that was used when the data was loaded. The general syntax of the UNLOAD command is `UNLOAD ('select-statement') TO 's3://object-path/name-prefix' authorization [ option, ... ]`.

UNLOAD also follows its own naming convention for the files it writes: by default, it appends a slice number and a part number to the name prefix you specify (see the Redshift documentation for UNLOAD). This answers a common question about unloaded file names, for example from someone extracting data from Redshift to S3 with code like UNLOAD (' select sysdate ') TO 's3://test-bucket/adretarget/test.

Resolution

Note: The following steps assume that the Amazon Redshift cluster and the S3 bucket are in the same Region. If they're in different Regions, you must add the REGION parameter to the COPY or UNLOAD command. The steps work regardless of your data format, although the COPY and UNLOAD syntax may change slightly depending on the format.

1. Create RoleA, an IAM role in the Amazon S3 account. In the IAM console, choose Policies, and then choose Create policy to define the Amazon S3 permissions for RoleA.
2. Create RoleB, an IAM role in the Amazon Redshift account with permissions to assume RoleA.
3. Test the cross-account access between RoleA and RoleB.

To test the access, chain both roles in the IAM_ROLE parameter of a COPY or UNLOAD command. For example, if you're using the Parquet data format, your syntax looks like this:

`copy table_name from 's3://awsexamplebucket/crosscopy1.csv' iam_role 'arn:aws:iam::Amazon_Redshift_Account_ID:role/RoleB,arn:aws:iam::Amazon_S3_Account_ID:role/RoleA' format as parquet`
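The three steps can be sketched as the policy documents each role needs. The following Python sketch is illustrative only: the account IDs, the bucket-level S3 permissions, and all helper names are hypothetical assumptions, not the exact policies from the AWS guide. It builds RoleA's trust policy (trusting RoleB), RoleB's trust policy (trusting the Redshift service) and its permission to call `sts:AssumeRole` on RoleA, plus the chained IAM_ROLE string used in the COPY example. RoleB must also be associated with the Redshift cluster or Serverless namespace before COPY or UNLOAD can use it.

```python
import json

# Hypothetical account IDs and bucket name; replace with your own.
REDSHIFT_ACCOUNT_ID = "111111111111"
S3_ACCOUNT_ID = "222222222222"
BUCKET = "awsexamplebucket"

ROLE_A_ARN = f"arn:aws:iam::{S3_ACCOUNT_ID}:role/RoleA"        # lives in the S3 account
ROLE_B_ARN = f"arn:aws:iam::{REDSHIFT_ACCOUNT_ID}:role/RoleB"  # lives in the Redshift account


def _trust_policy(principal: dict) -> dict:
    """Trust-policy document allowing `principal` to call sts:AssumeRole."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Principal": principal, "Action": "sts:AssumeRole"}
        ],
    }


def role_a_trust_policy(role_b_arn: str) -> dict:
    """Step 1: RoleA trusts RoleB from the Redshift account."""
    return _trust_policy({"AWS": role_b_arn})


def role_a_s3_policy(bucket: str) -> dict:
    """Step 1 (illustrative): RoleA may list the bucket and read/write its objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow",
             "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
             "Resource": f"arn:aws:s3:::{bucket}"},
            {"Effect": "Allow",
             "Action": ["s3:GetObject", "s3:PutObject"],
             "Resource": f"arn:aws:s3:::{bucket}/*"},
        ],
    }


def role_b_trust_policy() -> dict:
    """Step 2: RoleB trusts the Redshift service."""
    return _trust_policy({"Service": "redshift.amazonaws.com"})


def role_b_permissions_policy(role_a_arn: str) -> dict:
    """Step 2: RoleB may assume RoleA."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "sts:AssumeRole", "Resource": role_a_arn}
        ],
    }


def chained_iam_role(*role_arns: str) -> str:
    """IAM_ROLE value for role chaining: ARNs joined by commas, no spaces."""
    return ",".join(role_arns)


def cross_account_copy(table: str, s3_path: str) -> str:
    """Step 3: a COPY statement chaining RoleB -> RoleA (Parquet, as in the example)."""
    role_chain = chained_iam_role(ROLE_B_ARN, ROLE_A_ARN)
    return f"copy {table} from '{s3_path}' iam_role '{role_chain}' format as parquet"


if __name__ == "__main__":
    for name, doc in [
        ("RoleA trust policy", role_a_trust_policy(ROLE_B_ARN)),
        ("RoleA S3 policy", role_a_s3_policy(BUCKET)),
        ("RoleB trust policy", role_b_trust_policy()),
        ("RoleB permissions policy", role_b_permissions_policy(ROLE_A_ARN)),
    ]:
        print(f"-- {name}\n{json.dumps(doc, indent=2)}")
    print(cross_account_copy("table_name", "s3://awsexamplebucket/crosscopy1.csv"))
```

Each printed document can be pasted into the JSON view of the IAM policy editor. The role ARNs in IAM_ROLE are listed with RoleB (the role attached to Redshift) first and RoleA second, separated by a comma with no spaces.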

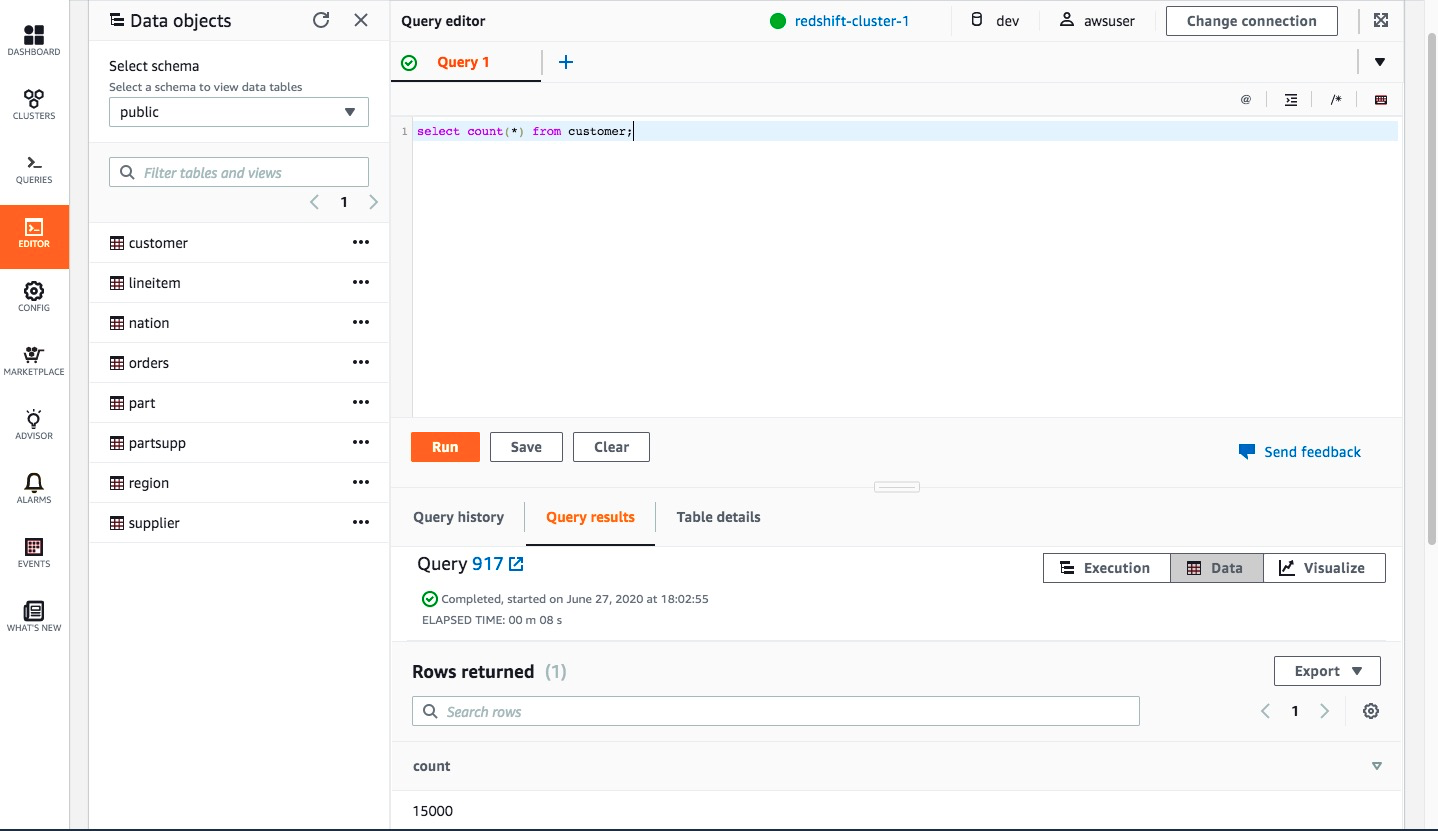

These steps apply to both Redshift Serverless and Redshift provisioned data warehouses. They are how you access Amazon S3 resources that are in a different account from the one where Amazon Redshift is in use.

Redshift users can unload data in two main ways:

1. Using the SQL UNLOAD command.
2. Downloading the query result from a client.

The most convenient way of unloading data from Redshift is to run the UNLOAD command in a SQL IDE.
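A statement that follows the general UNLOAD syntax can be assembled programmatically before you run it in a SQL IDE or client. This Python sketch is hypothetical: the helper names, bucket, prefix, and role ARN are placeholders. Because the query is itself passed to UNLOAD as a quoted string, the sketch doubles any single quotes inside it (the standard SQL escape for a quote in a string literal). Option clauses such as `FORMAT AS CSV`, `HEADER`, or `MAXFILESIZE 1 GB` are appended verbatim and are not validated.

```python
from typing import Iterable


def quote_literal(text: str) -> str:
    """Wrap text in single quotes, doubling any embedded single quotes."""
    return "'" + text.replace("'", "''") + "'"


def build_unload(select_sql: str, s3_prefix: str, iam_role: str,
                 options: Iterable[str] = ()) -> str:
    """Assemble UNLOAD ('select-statement') TO 's3://...' IAM_ROLE '...' [option ...]."""
    parts = [
        f"UNLOAD ({quote_literal(select_sql)})",
        f"TO {quote_literal(s3_prefix)}",
        f"IAM_ROLE {quote_literal(iam_role)}",
    ]
    parts.extend(options)  # e.g. "FORMAT AS CSV", "HEADER", "PARALLEL OFF"
    return "\n".join(parts) + ";"


if __name__ == "__main__":
    print(build_unload(
        "select * from public.mytable where state = 'NV'",
        "s3://amzn-s3-demo-bucket/unload/mytable_",  # hypothetical bucket/prefix
        "arn:aws:iam::123456789012:role/my-role",     # hypothetical role
        ["FORMAT AS CSV", "HEADER", "MAXFILESIZE 1 GB"],
    ))
```

The literal `'NV'` in the query comes out as `''NV''` inside the UNLOAD statement, so the outer quotes around the query stay balanced.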

Redshift's UNLOAD command complements the COPY command by performing exactly the opposite function: COPY takes data from an Amazon S3 bucket and puts it into a Redshift table, while UNLOAD takes the result of a query and stores the data in Amazon S3.

If the unloaded data is larger than 6.2 GB, UNLOAD creates a new file for each 6.2 GB data segment. To create smaller files, include the MAXFILESIZE parameter. For example, if the data is 20 GB, an UNLOAD command with PARALLEL OFF and MAXFILESIZE 1 GB creates 20 files, each 1 GB in size.

A question asked on the AWS forum: I am attempting to unload data from Redshift using the EXTENSION parameter to specify a CSV file extension. The CSV extension is useful to allow data files to be opened, e.g. … The command I run is unload ('select * from public.mytable') with iam_role 'arn:aws:iam::xxxxxxx:role/my-role'. This command throws an error message: `SQL Error : ERROR: syntax error at or near "extension"`. It appears that the extension option is not recognized. I believe I have followed the official documentation and examples. I am testing the query from a Java application and from DBeaver. Do I have a syntax error in my query? Could this be a Redshift bug?
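The file-count arithmetic in the 20 GB example is a ceiling division. This Python sketch is a rough estimate only, assuming a serial unload (PARALLEL OFF) and files that fill exactly up to the size limit; with the default parallel unload, Redshift writes one or more files per slice, so the real count is usually higher. The 6.2 GB default comes from the text above; the function name is hypothetical.

```python
import math

DEFAULT_MAX_FILE_GB = 6.2  # UNLOAD's default upper limit per file


def estimated_file_count(data_gb: float, max_file_gb: float = DEFAULT_MAX_FILE_GB) -> int:
    """Rough number of files a serial (PARALLEL OFF) UNLOAD writes."""
    if data_gb <= 0:
        raise ValueError("data_gb must be positive")
    if not 0 < max_file_gb <= DEFAULT_MAX_FILE_GB:
        raise ValueError("max_file_gb must be in (0, 6.2]")
    # Each file holds at most max_file_gb, so round the quotient up.
    return math.ceil(data_gb / max_file_gb)


if __name__ == "__main__":
    print(estimated_file_count(20, 1))  # → 20 (MAXFILESIZE 1 GB)
    print(estimated_file_count(20))     # → 4 (default 6.2 GB limit)
```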
